The integration of artificial intelligence into enterprise operations has transitioned definitively from a phase of experimental curiosity into a foundational operating condition. By 2026, artificial intelligence is no longer an isolated capability managed exclusively by engineering departments, data scientists, or specialized technical silos; rather, it is intricately woven into the daily workflows of marketing, operations, human resources, and administrative professionals. This universal adoption has initiated a profound structural shift in the global hiring landscape. While macroeconomic headlines often focus on broader economic slowdowns, gross domestic product fluctuations, or interest rate hikes, recruitment data reveals a starkly different micro-level reality. Hiring volumes remain robust, driven by an urgent, cross-sector demand for candidates who can practically leverage AI to eliminate bottlenecks, improve decision quality, and optimize business processes.

The fundamental challenge for non-technical candidates—those without a background in software development, machine learning algorithms, or complex coding environments—is effectively demonstrating this capability to hiring managers. Traditional resumes, which rely heavily on self-reported proficiencies and bulleted lists of software tools, are increasingly viewed with skepticism by talent acquisition teams. The ability to write a basic, conversational prompt in a web interface is thoroughly commoditized. The true professional differentiator in 2026 is the capacity to engineer structured systems, enforce brand voice constraints, audit outputs for hallucinations, and generate immediately deployable business artifacts. This necessitates a permanent transition from the traditional resume to the "Applied AI Portfolio"—a documented, shareable proof-of-work that visually and structurally validates a candidate's AI literacy and systemic thinking.

This comprehensive research report investigates how non-technical professionals in 2026 are successfully architecting prompt libraries, documenting human-in-the-loop iteration, optimizing for algorithmic discoverability, and leveraging advanced prompting patterns to prove their strategic value to prospective employers, all without relying on GitHub or traditional developer portfolios.

The 2026 Talent Acquisition Landscape: AI Fluency as a Baseline

The definition of a highly qualified candidate has been irrevocably altered. Employers in 2026 are not universally seeking deep technical experts capable of training large language models (LLMs) from scratch; doing so requires millions of dollars in computing power and specialized data engineering. Instead, they are prioritizing "multidisciplinary technologists" and operational professionals who possess practical, everyday AI abilities paired with deep domain expertise. This paradigm shift is driven by the realization that the most successful organizations are not necessarily those deploying the most advanced proprietary models, but rather those that thoughtfully integrate off-the-shelf AI to enhance human decision-making, governance, and operational judgment.

The Transition from Automation Anxiety to Human-AI Collaboration

The narrative surrounding artificial intelligence in the workplace has matured significantly over the past few years. Early fears of widespread technological displacement and absolute automation anxiety have given way to a strategic focus on human-AI collaboration. Extensive data from the United Kingdom, for instance, indicates that 62% of business leaders are actively creating or planning to create new roles directly in response to AI adoption, with a further 22% reshaping existing roles. Many of these positions are mid-tier or entry-level and are explicitly focused on enabling teams to use AI safely and effectively.

These emerging roles bridge the gap between complex algorithmic outputs and practical business applications. Responsibilities often include managing AI tools, troubleshooting workflows, spotting errors, and helping non-technical teams extract real value from the technology in-house. Consequently, hiring managers are actively seeking candidates who demonstrate strong cognitive soft skills enhanced by AI literacy. These include critical thinking for evaluating outputs, creative ideation, structured communication, and emotional intelligence—traits that machines cannot replicate.

The U.S. Department of Labor's 2026 AI Literacy Framework codifies this shift, emphasizing that career readiness in the AI era is fundamentally about human judgment. The framework outlines foundational content areas such as understanding AI principles, evaluating outputs, and responsible AI use. Students and professionals alike must know when to rely on algorithmic output, when to challenge it, and how to inject distinctly human context into machine-generated drafts. AI literacy, therefore, transforms from a technical luxury into a baseline professional obligation.

The Rise of Verified Skill Credentials and the Demise of the Static Resume

In response to the proliferation of embellished AI skills on traditional resumes, professional networks and applicant tracking systems have implemented stringent verification mechanisms. In January 2026, LinkedIn launched the Verified AI Skills program so that recruiters no longer have to verify candidates' claims of AI proficiency by hand. Rather than self-reporting expertise, candidates rely on automated validation: LinkedIn partnered with third-party AI tool providers to validate a user's proficiency and display it directly in their certification section.

This ecosystem is explicitly designed to benefit non-technical candidates who build applications and workflows. The program launched with partners including Lovable, Replit, Relay.app, and Descript, and quickly expanded to include support for Gamma and Zapier. Users can connect their workspace from a tool like Lovable directly to LinkedIn via an API. The resulting proficiency score, which evolves to reflect growing complexity, feature breadth, and depth of use, is then listed on the user's profile, providing third-party validation of their abilities rather than a self-reported claim.

Because non-coders typically do not utilize GitHub to store repositories of code, these alternative platforms and validation formats have emerged as the absolute standard for hosting the "Prompt Playbook." Recruiters no longer want to read that a candidate "knows ChatGPT"; they want to see a validated portfolio that demonstrates exactly how the candidate solved a specific business problem, what failed during the process, and what was learned during the iteration.

Architecting the "Prompt Playbook": Platform Selection and Strategy

A non-technical candidate's portfolio cannot rely on raw Python scripts, Jupyter notebooks, or complex development environments. Instead, it must utilize accessible, highly visual, no-code platforms to host a "Prompt Playbook"—a curated, organized library of prompt templates, operational workflows, and detailed case studies demonstrating practical business impact.

The architecture of these playbooks must signal to hiring managers that the candidate understands systems thinking rather than isolated task execution. A portfolio should never be a chaotic gallery of random AI-generated images or generic text outputs. It must be structured as a "decision trail" that illustrates how ambiguous organizational goals are transformed into structured briefs, how execution is planned, and how success is ultimately measured.

Platform Selection: Moving Beyond the Static PDF Document

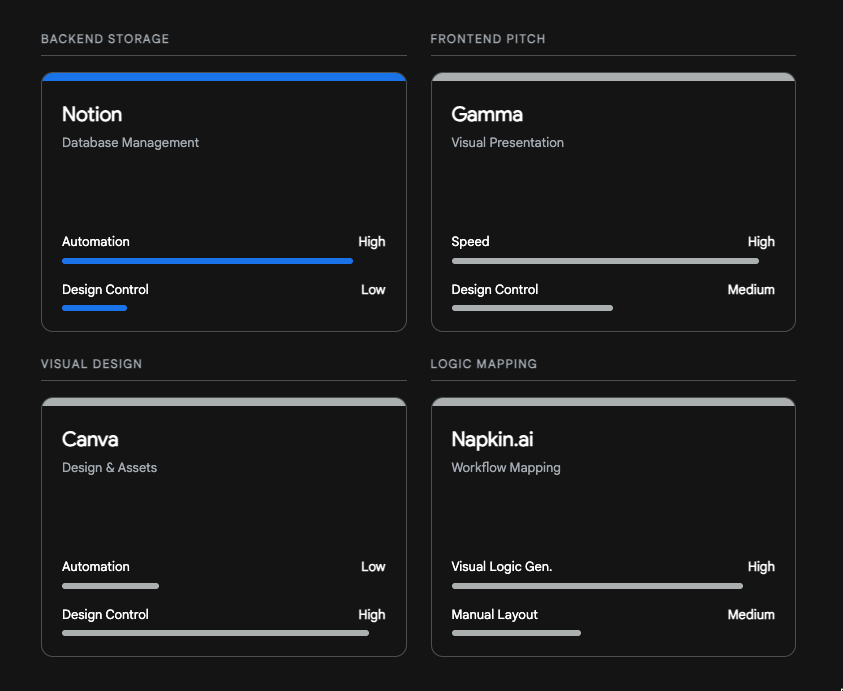

Hiring managers strongly prefer interactive, easily navigable environments that mimic internal company wikis, digital workspaces, or modern knowledge bases. Choosing the correct platform is the first critical decision a candidate makes, as it immediately signals their awareness of the modern digital operations stack. The most successful non-technical portfolios in 2026 leverage specific platforms tailored to different layers of the portfolio architecture.

| Platform Category | Leading Tools | Primary Portfolio Application | Evaluative Commentary |
|---|---|---|---|
| Relational Databases & Workspaces | Notion, Airtable | Hosting the core Prompt Library. Tracking metadata, categories, and iteration history. | Notion has become the undisputed gold standard for non-technical prompt portfolios. Candidates utilize its relational databases to build smart prompt libraries featuring properties like AI autofill, categorical tagging, and timestamped updates. Furthermore, the integration of Notion AI Agents allows candidates to demonstrate how a static prompt template can be transformed into an autonomous, scheduled workflow that runs hands-free. Airtable provides a similar, robust database layer suitable for complex internal tools, often praised for its data-first model and powerful workflow automations. |
| Rapid Presentation & Ideation | Gamma, Twistly | Showcasing specific case studies and converting raw data into executive summaries. | For candidates needing to present a polished case study quickly, Gamma offers an AI-native alternative to traditional slide decks. It focuses on rapid deck building from short prompts, utilizing modern, minimalist card systems. Hiring managers appreciate Gamma links for their clean, easily digestible presentation of a candidate's thought process, prioritizing speed and narrative structure over pixel-perfect manipulation. Twistly operates similarly, turning research or long documents into presentation-ready slides instantly. |
| Visual Design & Asset Creation | Canva | Demonstrating final output formatting, brand adherence, and asset polish. | Canva remains dominant for design-first users. While Gamma excels at rapid structural layout, Canva's massive template library and nuanced editing controls allow marketing candidates to prove they can take an AI-generated conceptual draft and manually refine it to match strict corporate brand guidelines. It requires more manual effort but rewards the user with total visual control, making it ideal for the final "artifact" display. |
| Workflow Automation & Integration | Zapier, Make, n8n, LangFlow | Demonstrating the ability to connect prompts to live business systems. | High-tier non-technical portfolios showcase actual automations. Zapier is the classic, user-friendly choice for rapid workflow building, while Make offers highly visual mapping tools for more complex logic. For true AI-centric automation, platforms like LangFlow provide visual interfaces for prototyping agent logic, demonstrating advanced systems thinking. |
| Visual Concept Mapping | Napkin.ai | Illustrating the mental models behind complex prompt chains and workflows. | Napkin.ai has emerged as a critical portfolio addition in 2026. It utilizes machine learning to convert text descriptions into professional wireframes and workflow diagrams instantly. Candidates use this to visually map their prompt iteration process, showing how an initial input transforms through various constraints into a final output, replacing dense text explanations with clear visual logic. |

Figure: Optimal Platform Architecture for Non-Technical AI Portfolios. Notion serves as the foundational database for prompt libraries, while tools like Gamma and Napkin.ai are leveraged for presenting case studies and mapping cognitive workflows.

Structuring the Playbook: Taxonomies and Advanced Frameworks

When building the playbook in a tool like Notion, candidates must demonstrate a profound understanding of prompt taxonomy. The most sophisticated portfolios eschew basic one-line requests—such as "Write a blog post about our new product"—in favor of highly structured architectural frameworks. Data from AI-leading marketing teams reveals that 80% of prompts in typical organizations are rudimentary one-liners, resulting in generic, interchangeable content that leads management to falsely conclude that "AI doesn't work for us". Conversely, successful candidates prove they operate differently.

One foundational structure widely showcased in 2026 portfolios is the RCT Framework (Role, Context, Task):

- Role: Establishing the precise persona and expertise level of the AI. Rather than leaving the AI's identity blank, a candidate specifies: "You are a senior copywriter with 15 years of B2B SaaS experience specializing in mid-market automation".

- Context: Providing the necessary background constraints. The AI is fed specific target audience demographics, desired tone (e.g., "Professional but not stiff, data-driven"), and explicit negative constraints such as avoiding specific corporate jargon.

- Task: Defining the exact deliverable, formatting requirements, and structural limitations. Instead of "write a post," the task becomes "Create 5 LinkedIn post variants, max 200 words each, utilizing specific hook formats (Story, Statistic, Question), ending with a direct CTA".

By meticulously documenting prompts within these rigid, predictable frameworks, candidates show hiring managers that their outputs are consistent and repeatable, rather than the result of lucky, unstructured conversational interactions.
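As a minimal sketch of how an RCT entry could be stored and rendered the same way every time, the snippet below models one prompt as three fields. The class name `RCTPrompt`, the `render` method, and the block labels are illustrative assumptions, not part of any tool mentioned in this report.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RCTPrompt:
    """One prompt-library entry split into Role, Context, and Task blocks."""
    role: str
    context: str
    task: str

    def render(self) -> str:
        # Emit the three blocks in a fixed order with labeled headers so
        # every prompt in the library has the same visible shape.
        return (
            f"ROLE:\n{self.role}\n\n"
            f"CONTEXT:\n{self.context}\n\n"
            f"TASK:\n{self.task}"
        )


# Example values taken from the RCT description above.
linkedin_prompt = RCTPrompt(
    role="You are a senior copywriter with 15 years of B2B SaaS experience "
         "specializing in mid-market automation.",
    context="Tone: professional but not stiff, data-driven. "
            "Avoid corporate jargon.",
    task="Create 5 LinkedIn post variants, max 200 words each, using "
         "Story, Statistic, and Question hooks, ending with a direct CTA.",
)

rendered = linkedin_prompt.render()
print(rendered)
```

A non-coder would keep the same three fields as columns in a Notion or Airtable database; the point of the sketch is only that the structure is fixed while the content varies.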

Furthermore, high-level operators utilize the KERNEL Framework to ensure token efficiency and systemic reliability. KERNEL, developed from the analysis of thousands of enterprise prompts, stands for:

- Keep it simple: Abandoning massive walls of text in favor of one clear goal. Replacing a 500-word rambling context drop with "Write a technical tutorial on Redis caching" results in 70% less token usage and 3x faster responses.

- Easy to verify: Replacing subjective instructions like "make it engaging" with verifiable criteria like "include exactly three industry-specific case studies." Testing shows an 85% success rate with clear criteria versus 41% without.

- Reproducible results: Removing temporal references ("latest trends") to ensure the prompt performs consistently week over week.

- Narrow scope: Enforcing the rule of one prompt equals one goal. Splitting complex tasks into a sequential chain produces an 89% satisfaction rate compared to multi-goal failures.

- Explicit constraints: Dictating exactly what the AI must not do, which reduces unwanted, hallucinated outputs by up to 91%.

- Logical structure: Formatting the prompt into discrete blocks (Context, Task, Constraints, Format) for absolute clarity.

When a hiring manager reviews a Notion database and sees every prompt neatly categorized under KERNEL or RCT parameters, it immediately signals that the candidate views AI not as a magic text generator, but as a software system whose output quality depends on precise, structured inputs.
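Some of the KERNEL checks can be approximated mechanically. The following is a rough sketch, not an established tool: the function `kernel_lint` and its word lists are hypothetical, and a keyword match is only a crude stand-in for human review of the "Reproducible", "Easy to verify", and "Logical structure" criteria.

```python
import re

# Hypothetical word lists; adjust them for your own domain.
TEMPORAL_TERMS = ("latest", "current", "recent", "this week", "today")
SUBJECTIVE_TERMS = ("engaging", "compelling", "interesting", "good")
REQUIRED_BLOCKS = ("Context:", "Task:", "Constraints:", "Format:")


def kernel_lint(prompt: str) -> list[str]:
    """Return human-readable warnings for a prompt draft."""
    warnings = []
    lowered = prompt.lower()
    for term in TEMPORAL_TERMS:
        # Whole-word match so "current" does not fire on "concurrent".
        if re.search(rf"\b{re.escape(term)}\b", lowered):
            warnings.append(f"Reproducible: temporal reference '{term}'")
    for term in SUBJECTIVE_TERMS:
        if re.search(rf"\b{re.escape(term)}\b", lowered):
            warnings.append(f"Easy to verify: subjective wording '{term}'")
    for block in REQUIRED_BLOCKS:
        if block.lower() not in lowered:
            warnings.append(f"Logical structure: missing '{block}' block")
    return warnings


one_liner = "Write an engaging post about the latest trends."
structured = (
    "Context: B2B SaaS audience.\n"
    "Task: Write a technical tutorial on Redis caching.\n"
    "Constraints: No marketing language.\n"
    "Format: Markdown with three sections."
)

one_liner_warnings = kernel_lint(one_liner)
structured_warnings = kernel_lint(structured)
```

Here the one-line draft triggers a temporal warning, a subjective-wording warning, and four missing-block warnings, while the structured draft passes cleanly.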

Documenting the Human-in-the-Loop (HITL) Iteration Process

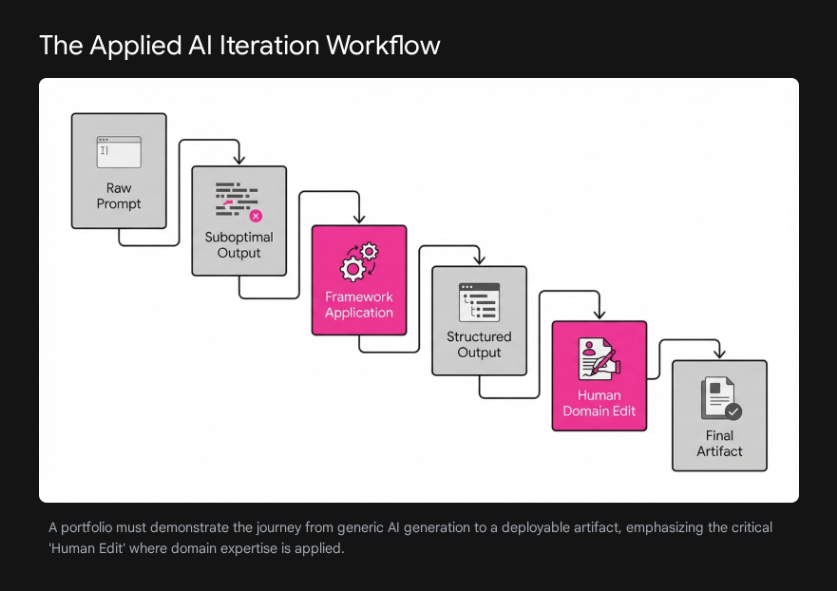

A persistent and fatal flaw in early AI portfolios was the "gallery approach," where candidates merely displayed the final, polished output of an AI generation, stripping away the context of its creation. Hiring managers in 2026 universally dismiss this format. They are acutely aware that raw AI output is often generic, prone to factual hallucination, structurally predictable, and misaligned with specific corporate nuances. A portfolio is not a gallery; it is a decision trail.

The modern portfolio must visualize the "Human Edit"—the critical intervention where a professional applies domain expertise to correct, refine, limit, and elevate the machine's output. This human-in-the-loop (HITL) methodology proves that the candidate is a skilled operator who governs the AI, providing oversight, judgment, and context, rather than acting as a passive recipient of its data.

Frameworks for Documenting Iterative Problem Solving

To effectively showcase this iterative process, candidates utilize established prompt engineering methodologies to structure their case studies. The SHRM Framework (Specify, Hypothesize, Refine, Measure), originally popularized in human resources contexts, is widely adopted across operations and administrative portfolios to demonstrate rigorous iterative problem-solving.

The SHRM framework requires the candidate to explicitly document their cognitive process:

- Specify: The candidate documents the initial, highly specific goal and context provided to the model. For example, an HR professional might specify: "Write a 100‑word overview to help HR business partners explain the performance management process to the finance department".

- Hypothesize: The portfolio details the candidate's anticipation of how the AI might fail. This proves foresight. The candidate notes, "I hypothesized the model would use overly technical HR jargon that the finance team would ignore".

- Refine: This is the crux of the HITL proof. The candidate shows the exact adjustments made to the prompt or the manual edits applied to the text based on the initial output. They document adding the constraint: "Use plain English; mention high-performing teams specifically".

- Measure: The candidate establishes quantitative benchmarks for success, scoring the output on clarity, coherence, and brevity, demonstrating a rigorous approach to evaluating qualitative outputs.
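The Measure step can be made concrete with a very small scoring helper. This is a sketch under stated assumptions: the function name `score_output`, the 1 to 5 rating scale, and the 100-word limit (borrowed from the Specify example above) are illustrative choices, and the ratings themselves still come from a human reviewer.

```python
from statistics import mean


def score_output(text: str, ratings: dict[str, int], max_words: int = 100) -> dict:
    """Combine a word-count check with reviewer ratings on a 1-5 scale."""
    word_count = len(text.split())
    return {
        "word_count": word_count,
        "within_limit": word_count <= max_words,
        "mean_rating": mean(list(ratings.values())),
    }


# Hypothetical draft text and reviewer ratings.
draft = (
    "Performance reviews happen twice a year and focus on goals "
    "agreed with your manager."
)
result = score_output(draft, {"clarity": 4, "coherence": 5, "brevity": 3})
print(result)
```

Recording the same three ratings for the "before" and "after" versions of a draft gives the case study a comparable number instead of an impression.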

Consider a comprehensive case study documenting the creation of an "Employee Development Plan." A strong portfolio would show the raw inputs provided to the AI (the employee's title, strengths, weaknesses, budget, and timeframes). The candidate would then display the AI's initial draft, pointing out where the AI suggested a generic online course. The portfolio then highlights the "Human Edit," showing how the candidate manually replaced the generic course with a specific internal mentorship program and aligned the timeline with the company's specific quarterly goals. This demonstrates that the candidate uses AI for heavy lifting but relies on human intelligence for strategic alignment.

Visualizing the Iteration Process

Successful portfolios utilize a side-by-side layout or a linear timeline to walk the hiring manager through the lifecycle of a prompt. Tools like Napkin.ai are frequently deployed here to generate clear visual maps of the candidate's cognitive workflow, transforming dense paragraphs of explanation into highly digestible diagrams.

The narrative flow of a standard, highly-rated portfolio case study follows a strict, five-part sequence:

- The Business Problem: A clear statement of the operational bottleneck. (e.g., "The sales team needed a faster way to summarize weekly client feedback calls to identify churn risks.")

- The "Bad" AI Output (Before): The candidate shows the result of a generic, zero-shot prompt, explicitly highlighting the flaws. This might include hallucinated data points, an overly enthusiastic tone inappropriate for churn analysis, or unusable formatting.

- The Refined Prompt Engineering: The candidate displays the application of the RCT or KERNEL framework, introducing constraints, few-shot examples, and strict formatting rules.

- The Human Edit (The Value Add): The candidate explicitly highlights what they manually changed in the final AI output. This might involve injecting personal domain knowledge, correcting a logical fallacy, or aligning the prose with the company's subtle cultural nuances. They show exactly where human judgment and emotional depth override the model's statistically predictable phrasing.

- The Final Business Artifact: The deployable asset is presented, accompanied by metrics showing the specific time saved or efficiency gained by utilizing the hybrid approach.

The "Tail Generation" Proof: Guaranteeing Deployable Artifacts

For operations, marketing, and administrative professionals, the ultimate proof of AI literacy is the ability to generate outputs that require zero structural or formatting adjustments before deployment. Hiring managers are fundamentally looking for candidates who can save them time; an AI output that generates excellent content but requires thirty minutes of manual reformatting in Excel or a CMS completely negates the efficiency of the tool.

To achieve absolute structural control, expert non-technical prompters utilize specific syntax patterns. The most vital of these documented in 2026 portfolios is the Tail Generation Pattern.

Mechanics of the Tail Generation Pattern

Large Language Models function primarily by predicting the next token based on the preceding context. Therefore, the placement of instructions within a prompt can significantly affect the final output. When a prompt contains a long document to analyze or a complex set of instructions, the model can sometimes "forget" formatting constraints placed at the beginning of the prompt by the time it begins generating the response.

The Tail Generation Pattern addresses this by placing the most critical formatting constraints, output structures, or follow-up instructions at the very end (the "tail") of the prompt, or in the second-to-last position when a closing line must come last.

The structural syntax generally follows this formula:

- "At the end of your response, repeat and ask me for [X]."

Placing the rule at the end keeps the constraint in the most recent context when the model begins generating, which reinforces the prompt structure and reduces stylistic drift.
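The ordering described here can be sketched as a small assembly function. The name `build_tail_prompt` and the block labels are assumptions made for illustration; the only idea taken from the text is that the output contract is always the last block, after the long source material.

```python
def build_tail_prompt(instructions: str, source_text: str, output_contract: str) -> str:
    """Assemble a prompt with the output contract as the final block."""
    parts = [
        instructions.strip(),
        "SOURCE TEXT:\n" + source_text.strip(),
        # The contract goes last so it is the most recent context
        # the model sees before it starts generating.
        "OUTPUT CONTRACT:\n" + output_contract.strip(),
    ]
    return "\n\n".join(parts)


contract = "Output this data strictly as valid CSV with the headers: Vendor, Date, Fee."
prompt = build_tail_prompt(
    instructions="Extract all vendor names, contract dates, and renewal fees "
                 "from the provided text.",
    source_text="(paste the contract text here)",
    output_contract=contract,
)
print(prompt)
```

In a no-code setting the same effect comes from a prompt template whose final field is always the format rule, regardless of how long the pasted document is.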

Creating Machine-Readable and Deployable Artifacts

Non-technical candidates use this pattern to guarantee that outputs are immediately useful for business operations. Instead of accepting conversational text, candidates enforce strict Output Contracts. They design prompts that forbid the AI from including conversational filler (e.g., "Certainly! Here is your requested data:").

Example 1: The CSV Data Extraction for Operations

An operations manager might need to extract vendor information from a dense, unstructured PDF contract. A standard prompt might yield a bulleted list that must be manually copied and pasted into a spreadsheet. By employing the Tail Generation Pattern and a strict output contract, the candidate forces a structured format ready for immediate database import.

- Prompt Body: "Extract all vendor names, contract dates, and renewal fees from the provided text."

- Tail Generation (The Contract): "Output this data strictly as a valid CSV format with the headers: Vendor, Date, Fee. Do not include any conversational filler before the CSV data. After the CSV, add exactly one closing line: 'Would you like to analyze another contract?'"
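An output contract is only useful if it is checked. The sketch below shows one way to verify a response against the CSV contract in this example using Python's standard `csv` module. The function name `parse_vendor_csv` and the sample vendor rows are hypothetical; the headers and the closing question come from the prompt above.

```python
import csv
import io

EXPECTED_HEADERS = ["Vendor", "Date", "Fee"]
FOLLOW_UP = "Would you like to analyze another contract?"


def parse_vendor_csv(response: str) -> list[dict[str, str]]:
    """Parse a model response; raise ValueError if it breaks the contract."""
    lines = [line for line in response.strip().splitlines() if line.strip()]
    # The prompt asks for one closing question; drop it before parsing.
    if lines and lines[-1].strip() == FOLLOW_UP:
        lines = lines[:-1]
    reader = csv.DictReader(io.StringIO("\n".join(lines)))
    if reader.fieldnames != EXPECTED_HEADERS:
        raise ValueError(f"Unexpected headers: {reader.fieldnames}")
    rows = list(reader)
    for row in rows:
        # DictReader stores surplus cells under the None key and
        # fills missing cells with None.
        if None in row or None in row.values():
            raise ValueError(f"Malformed row: {row}")
    return rows


# Hypothetical model response that honors the contract.
sample = (
    "Vendor,Date,Fee\n"
    "Acme Supplies,2026-01-15,1200\n"
    "Northwind Logistics,2026-03-01,850\n"
    "\n"
    "Would you like to analyze another contract?"
)
rows = parse_vendor_csv(sample)
print(rows)
```

A response that opens with conversational filler fails the header check, which is exactly the failure the contract is meant to catch before the data reaches a spreadsheet.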

Example 2: JSON Formatting for Marketing Ops

Marketing operations professionals frequently need data structured for immediate programmatic import into CRMs, automation platforms, or content management systems. While they may not be writing complex Python scripts, they understand how to command the AI to produce perfect JSON objects.

- Prompt Body: "Based on the provided transcripts, summarize the core features of the product launch."

- Tail Generation (The Contract): "Format the final campaign brief exclusively as a valid JSON object matching the following exact schema: { "campaign_name": "", "target_audience": "", "key_message": "", "risks": "" }. Do not output markdown code blocks. Ensure there are no trailing commas. Validate the JSON before completing the output."
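Asking a model to "validate the JSON" does not guarantee valid JSON, so the receiving side should check it. The following is a minimal sketch using Python's standard `json` module; the function name `parse_campaign_brief` and the sample values are hypothetical, while the four keys come from the schema in this example. Note that strict JSON parsers accept only double-quoted keys and strings and reject trailing commas.

```python
import json

REQUIRED_KEYS = {"campaign_name", "target_audience", "key_message", "risks"}


def parse_campaign_brief(response: str) -> dict:
    """Parse a model response as JSON and check it against the schema keys."""
    try:
        brief = json.loads(response)
    except json.JSONDecodeError as exc:
        raise ValueError(f"Not valid JSON: {exc}") from exc
    if not isinstance(brief, dict):
        raise ValueError("Expected a JSON object at the top level")
    if set(brief) != REQUIRED_KEYS:
        missing = sorted(REQUIRED_KEYS - set(brief))
        extra = sorted(set(brief) - REQUIRED_KEYS)
        raise ValueError(f"Schema mismatch; missing={missing}, extra={extra}")
    return brief


# Hypothetical model response that honors the contract.
good_response = (
    '{"campaign_name": "Spring Launch", '
    '"target_audience": "Mid-market ops teams", '
    '"key_message": "Cut onboarding time", '
    '"risks": "Tight timeline"}'
)
brief = parse_campaign_brief(good_response)
print(brief["campaign_name"])
```

Showing a rejected response next to an accepted one in the portfolio makes the "output contract" claim verifiable rather than asserted.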

By dedicating a specific section of their portfolio to "Structured Output Generation," candidates definitively prove that their prompt engineering transcends basic chat interfaces. They demonstrate an ability to bridge the gap between unstructured natural language and rigid operational workflows, proving they can save entire departments hundreds of hours of manual data entry.

Algorithmic Discoverability: AI Governance and Keyword Optimization

In 2026, constructing a brilliant portfolio is only half the battle; ensuring it is seen by human eyes is equally critical. Roughly 75% of employers utilize Applicant Tracking Systems (ATS) to screen resumes and portfolios before any human review occurs. If a candidate possesses elite prompt engineering skills but fails to use the specific vocabulary programmed into the ATS by recruiters, their application will be automatically rejected, regardless of their actual qualifications. The terminology surrounding artificial intelligence has rapidly evolved from broad, generic hype words to specific, highly applied operational vocabulary.

The Shift from Generic Hype to Applied Governance Terminology

Previously, candidates might have populated their resumes with terms like "ChatGPT Expert," "Prompt Engineer," or "AI Enthusiast." In 2026, these are considered exceedingly weak signals. Hiring managers and ATS algorithms are meticulously calibrated to detect "AI-Hybrid Keywords"—terms that seamlessly blend traditional domain expertise with advanced AI governance, risk management, and operational execution.

This shift is largely driven by a massive transformation in the regulatory and compliance landscape. By 2026, the EU AI Act's obligations are phasing in, bringing strict transparency requirements and heavy penalties for non-compliance. Similarly, in the United States, the Colorado AI Act and the NAIC Model Bulletin require documented governance, bias controls, and audit-ready decision logs for AI systems. Corporate boards have recognized that 80% of AI projects fail due to inadequate infrastructure and poor governance. Consequently, they are desperate for non-technical staff who can safely manage AI risk on the front lines.

The most potent keywords that non-technical candidates must integrate into their portfolios fall into several distinct categories:

| Keyword Category | High-Impact Terminology | Contextual Definition & Application |
|---|---|---|
| Governance & Oversight | AI Output Auditing, Human-in-the-Loop (HITL), Hallucination Detection, Bias Testing | Demonstrates the candidate's ability to systematically review AI-generated content to detect factual errors, logical fallacies, or brand deviations before they pose a regulatory risk. |
| Workflow & Architecture | Prompt Optimization, AI-Assisted Workflows, RAG Knowledge Base, Zero-Shot/Few-Shot Prompting | Indicates an understanding of systems-level thinking. Candidates show they can chain prompts together, reduce token usage, and ground AI outputs in specific, private company data (RAG) rather than relying only on a model's general training data. |
| Data Structuring | Output Contracts, Valid JSON, CSV Schema Adherence, Machine-Readable Artifacts | Proves the candidate can generate structured data that requires no manual reformatting, bridging the gap between natural language and database ingestion. |
| Impact & Measurement | Accuracy Rate, Consensus Score, Tasks Per Hour, Time-to-Deployment | ATS systems score resumes significantly higher when AI skills are paired with hard quantitative impact metrics, proving reliability and velocity gains. |

The strongest portfolios and resumes do not simply list these keywords in an isolated "Skills" section at the bottom of a page. Instead, they weave them into achievement bullets and case study descriptions. For example, rather than stating a weak claim like "Used AI to help with marketing copy," a hyper-optimized, ATS-friendly bullet reads:

"Engineered a marketing prompt library using few-shot techniques to automate B2B campaign briefs, increasing output throughput by 40% while maintaining strict AI output auditing protocols to ensure zero brand hallucinations or compliance violations."

By utilizing this specific nomenclature, candidates signal to both the algorithmic gatekeepers and the human hiring managers that they understand the realities, risks, and systemic requirements of enterprise AI deployment.

The Definitive Blueprint: Building the Non-Coder's AI Portfolio

Synthesizing the research data, prevailing hiring manager preferences, and the technological capabilities expected in 2026, the following is a comprehensive, step-by-step blueprint for non-technical candidates to construct a compelling, interview-generating AI portfolio.

Step 1: Establish the Platform Stack

Candidates must avoid attempting to build custom websites from scratch if it detracts from the quality of the content. Utilizing the established no-code stack signals organizational competence and tool familiarity.

- The Backend (Notion): Create a dedicated Notion workspace. Utilize a structured "Prompt Library Organizer" template. Set up a relational database with specific columns for: Prompt Title, Operational Category (e.g., HR, Ops, Marketing), Inputs Required from the User, The Raw Prompt Structure (using RCT or KERNEL), and Expected Output Format.

- The Frontend (Gamma or Canva): When applying to specific roles, select two or three highly relevant case studies from the Notion database. Export this logic into Gamma to generate a fast, clean, easily navigable presentation deck. Alternatively, use Canva to meticulously design a polished PDF summary that strictly adheres to professional design standards.

- The Visualizer (Napkin.ai): For the most complex workflow presented, utilize Napkin.ai to generate a schematic flowchart. This chart must visually map how data moves from the initial ambiguous input, through the AI constraints, into the critical human edit phase, and finally to the deployable artifact. Embed this image directly into the Notion database or Gamma presentation.
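The database columns listed for the backend can be drafted as a plain CSV file before any workspace is set up. This is a sketch only: the column names paraphrase the list above, the sample entry is hypothetical, and it assumes the file will be brought into the database through the tool's CSV import rather than through any API.

```python
import csv
import io

COLUMNS = [
    "Prompt Title",
    "Operational Category",
    "Inputs Required",
    "Raw Prompt",
    "Expected Output Format",
]

# Hypothetical library entry used for illustration.
entries = [
    {
        "Prompt Title": "Exit interview synthesis",
        "Operational Category": "HR",
        "Inputs Required": "Exit interview transcripts",
        "Raw Prompt": "Role: Act as a Senior HR Data Analyst.",
        "Expected Output Format": "JSON object",
    },
]


def library_to_csv(rows: list[dict[str, str]]) -> str:
    """Serialize prompt-library rows to CSV text with a fixed column order."""
    buffer = io.StringIO()
    # DictWriter raises ValueError if a row contains a key outside COLUMNS.
    writer = csv.DictWriter(buffer, fieldnames=COLUMNS)
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


csv_text = library_to_csv(entries)
print(csv_text)
```

Fixing the column order up front keeps every later entry comparable, which is what makes the library read as a system rather than a collection of notes.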

Step 2: Implement the "Prompt Case Study" Template

Every entry in the portfolio must follow a strict, auditable narrative structure that highlights the human value-add. Adopt elements of the KERNEL framework and structure each case study exactly as follows:

1. The Operational Bottleneck: Clearly define the business problem being solved (e.g., "The HR department spent 4 hours weekly synthesizing exit interviews, leading to delayed retention strategies.").

2. The Prompt Architecture (The Input): Display the prompt using a block-style layout separating the components.

   - Role: "Act as a Senior HR Data Analyst."

   - Context: "Analyze the following exit interview transcripts. Maintain strict confidentiality."

   - Task: "Identify the top three recurring reasons for departure."

3. The Output Contract (The Tail Generation): Show exactly how deployability was forced.

   - "Format the output strictly as a JSON object with keys for 'primary_reason', 'frequency', and 'suggested_action'. Do not include markdown formatting or conversational text."

4. The AI Output Auditing (The Human Edit): Place the AI's raw output side-by-side with the final edited version.

   - Highlight: "The AI incorrectly categorized 'schedule issues' and 'long hours' as separate issues. I manually audited the data, merged them into a broader 'Work-Life Balance' category, and adjusted the tone for executive review."

5. The Business Impact: Conclude with hard metrics. "Reduced weekly synthesis time from 4 hours to 15 minutes, maintaining a 100% adherence to internal reporting schemas and eliminating data entry errors."
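The impact figure in the final step is simple arithmetic, and showing the calculation makes the claim auditable. The sketch below works through the 4-hours-to-15-minutes example; the function name `time_savings` is illustrative, and the 52-week annualization is an assumption that ignores holidays and weeks when the task is skipped.

```python
def time_savings(before_minutes: float, after_minutes: float) -> tuple[float, float]:
    """Return (minutes saved, percent reduction) for one run of a task."""
    saved = before_minutes - after_minutes
    percent = saved / before_minutes * 100
    return saved, percent


saved, percent = time_savings(before_minutes=4 * 60, after_minutes=15)
# saved is 225 minutes (3.75 hours) per week; percent is 93.75.

# Assumption: the task runs every week of the year.
annual_hours = saved * 52 / 60
# annual_hours is 195.0

print(f"{saved:.0f} min/week saved, {percent:.2f}% reduction, {annual_hours:.0f} h/year")
```

Stating the before and after values alongside the percentage lets a reviewer recompute the result instead of taking it on trust.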

Step 3: The AI-Hybrid Keyword Integration Checklist

Before publishing the portfolio or submitting the accompanying resume to an ATS portal, candidates must ensure the presence of the exact terminology expected by 2026 algorithms and technical recruiters.

2026 ATS Portfolio Verification Checklist

| Category | Required Terminology | Contextual Usage Example |
|---|---|---|
| Governance & Oversight | ✓ Risk Management System, ✓ Data Governance, ✓ Bias Testing, ✓ Automated Logging and Monitoring, ✓ Human Oversight Mechanisms, ✓ AI Output Auditing, ✓ Hallucination Detection & Fact-Checking | "Implement Risk Management Systems, enhance data governance with bias testing, and build automated logging and monitoring. Develop human oversight mechanisms and perform AI Output Auditing with Hallucination Detection and Fact-Checking." |
| Prompt Architecture & Output | ✓ Output Contract, ✓ Success Criteria & Constraints, ✓ Prompt Engineering, ✓ RLHF, ✓ Semantic Segmentation, ✓ Entity Extraction (NER) | "Define the Output Contract format and length. Establish Success Criteria and detail rules with Constraints. Use Prompt Engineering and RLHF for model training, alongside Semantic Segmentation and Entity Extraction (NER)." |
| Measurement & Impact | ✓ Customer Churn Minimization, ✓ Multidisciplinary Technologists, ✓ Accuracy Rate, ✓ Consensus Score & Tasks Per Hour, ✓ IoU (Intersection over Union), ✓ Pixel-Perfect Accuracy | "Demonstrate business problem solving like customer churn minimization as multidisciplinary technologists. Track key metrics including Accuracy Rate, Consensus Score, and Tasks Per Hour, while measuring IoU (Intersection over Union) to ensure Pixel-Perfect Accuracy." |

Ensure these specific AI-Hybrid terms are naturally integrated into project descriptions and resume bullets to guarantee algorithmic discoverability by 2026 Applicant Tracking Systems.
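A quick self-check for keyword coverage can be sketched in a few lines. This is not how any particular ATS scores documents, since those systems are proprietary; the function `keyword_coverage` is a hypothetical helper that only reports which chosen terms appear, case-insensitively, in a piece of text.

```python
def keyword_coverage(text: str, required_terms: list[str]) -> dict[str, bool]:
    """Report which required terms appear in the text, ignoring case."""
    lowered = text.lower()
    return {term: term.lower() in lowered for term in required_terms}


# The sample bullet from the keyword section of this report.
bullet = (
    "Engineered a marketing prompt library using few-shot techniques to "
    "automate B2B campaign briefs, increasing output throughput by 40% while "
    "maintaining strict AI output auditing protocols to ensure zero brand "
    "hallucinations or compliance violations."
)
terms = ["AI Output Auditing", "Few-Shot", "Human-in-the-Loop", "Hallucination Detection"]

coverage = keyword_coverage(bullet, terms)
missing = [term for term, found in coverage.items() if not found]
print(missing)
```

For this bullet the check finds "AI Output Auditing" and "Few-Shot" but flags "Human-in-the-Loop" and "Hallucination Detection" as absent, which points to the exact phrases worth working into a neighboring bullet.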

Conclusion

The professional landscape of 2026 has firmly established that artificial intelligence is not an autonomous replacement for human judgment, but rather a powerful, highly scalable amplifier of it. For marketing, operations, human resources, and administrative professionals, the ability to interface effectively with AI systems is no longer an optional curiosity; it is a fundamental professional obligation required to remain competitive in a tight labor market.

To navigate this environment and prove their value to hiring managers, non-technical candidates must discard the outdated notion of simply listing software tools on a static resume. They must adopt the mindset of an operational architect and a rigorous auditor. That means structuring Prompt Playbooks in accessible databases like Notion, documenting the human-in-the-loop iteration cycle through established frameworks like SHRM and KERNEL, using structural techniques like Tail Generation to produce immediately deployable business artifacts, and framing their professional narrative in precise AI governance vocabulary. Candidates who do so move from being passive users of technology to highly sought-after strategic assets. The ultimate non-coder's AI portfolio is not merely a showcase of what the artificial intelligence can generate; it is validated proof of what the human professional can achieve by actively managing, constraining, and directing it.