Introduction: The Paradigm Shift in Entry-Level Recruitment

By 2026, the macroeconomic landscape of entry-level recruitment has undergone a seismic and irrevocable transformation. The traditional heuristic for evaluating recent university graduates, historically reliant upon the prestige of academic institutions, cumulative grade point averages, and static, standardized degree titles, has been rendered largely obsolete by the ubiquitous proliferation of generative artificial intelligence and autonomous agentic workflows. As multinational corporations, boutique agencies, and enterprise organizations integrate large language models (LLMs) and multi-agent systems into their foundational operations, the market premium placed on standard, repetitive cognitive labor has precipitously diminished. In its place, the labor market now disproportionately rewards a modernized competency known as "AI Literacy": the ability to leverage, guide, verify, and strategically refine artificial intelligence outputs to generate business value.

For graduates holding non-technical degrees, such as a Bachelor of Arts in Communications, Marketing, Sociology, or the Humanities, this technological shift presents a unique and highly complex paradox. On one hand, the rote, repetitive tasks traditionally assigned to junior employees and recent graduates (drafting introductory emails, summarizing market research, compiling preliminary analytics reports, and formatting data) are now largely automated by algorithmic systems. On the other hand, the corporate demand for professionals who possess the critical thinking, ethical grounding, and contextual domain understanding necessary to manage, oversee, and correct these automated systems has skyrocketed. The World Economic Forum’s Future of Jobs Report 2020 projected that 85 million jobs would be displaced and 97 million new, technology-augmented roles would emerge by 2025. That shift is actively materializing, with the new positions heavily favoring AI-augmented workers over AI-resistant ones.

This comprehensive research report provides an exhaustive, evidence-based investigation into how non-technical graduates can successfully reframe a traditional "Standard Degree" into a highly coveted "AI-First" professional profile without requiring a background in computer science or software engineering. By systematically analyzing 2026 algorithmic recruitment protocols, evolving corporate compliance frameworks, the psychological shifts within human resources departments, and the structural anatomy of digital professional branding, this analysis constructs a definitive blueprint for career entry. The subsequent sections detail the essential terminology, behavioral interview frameworks, social proof mechanisms, and resume optimization strategies explicitly required to navigate and conquer the AI-driven hiring processes of the modern enterprise.

Section 1: The Anatomy of "AI Literacy" in the 2026 Enterprise

To effectively navigate how modern recruiters evaluate candidates, it is imperative to first deconstruct what "AI Literacy" actually signifies within a stringent corporate context. A pervasive misconception among recent graduates is the belief that AI literacy necessitates a formal background in computer science, Python coding, or machine learning systems architecture. The reality of the 2026 labor market dictates that for the non-technical professional, AI literacy is defined as the operational mastery of artificial intelligence as an augmentation tool, coupled with a deep, uncompromising understanding of its limitations, ethical implications, and security vulnerabilities.

The End of the "Prompt Engineer" Era

In the early developmental stages of generative AI adoption between 2023 and 2024, "Prompt Engineering" was heralded as the defining, lucrative skill of the future. However, by 2026, the novelty of basic prompting has entirely evaporated from the professional landscape. Modern foundational models possess vastly superior intent-recognition capabilities, meaning that the mere ability to type a detailed instruction into a chat interface is no longer a distinct competitive advantage. What enterprise organizations demand instead is a synthesis of "Workflow Orchestration" and strict "Quality Assurance." The core operational problem facing modern enterprises is no longer the generation of text, code, or data; rather, the challenge lies in ensuring that the synthetically generated output is factually accurate, perfectly aligned with proprietary brand guidelines, completely free from systemic bias, and compliant with increasingly stringent global legal standards.

The Impact of the 2026 Regulatory Environment

The contemporary regulatory environment heavily dictates the parameters of non-technical hiring. With the impending full enforcement of comprehensive regulatory frameworks such as the European Union's AI Act—which mandates AI literacy provisions and strict governance for high-risk applications—companies face severe financial and reputational penalties for organizational negligence. A primary concern for executive teams is the proliferation of "Shadow AI," which refers to the unauthorized, unmonitored, or unverified use of artificial intelligence tools by employees attempting to increase personal productivity. Furthermore, organizations are now legally and ethically required to maintain transparent, auditable records of human oversight for high-risk or client-facing AI applications.

Consequently, human resource departments and automated screening algorithms are actively searching for candidates who explicitly demonstrate an understanding of AI governance and risk management. A candidate who boasts during an interview that they utilize AI for every task to work ten times faster will immediately trigger a compliance red flag, signaling a high-risk employee. Conversely, a candidate who clearly articulates that they utilize approved large language models for initial ideation and data structuring, but rigorously apply a human-in-the-loop verification protocol to ensure zero hallucinations and absolute data privacy compliance, demonstrates the profound AI literacy that modern enterprises desperately require.

Section 2: The Lexicon of Augmentation: Ten Essential "AI-Hybrid" Keywords

Applicant Tracking Systems (ATS) and human recruiters alike utilize algorithmic scanning software to parse resumes for specific terminologies that indicate modern technological competence. By 2026, the most lucrative entry-level roles—even those existing entirely outside of the software engineering domain—explicitly require "AI-hybrid" capabilities. Graduates must consciously discard outdated action verbs and integrate the precise vocabulary of the augmented workforce to pass initial screening algorithms. The strategic inclusion of these keywords signals to the employer that the candidate views technology as an infrastructure for scalability rather than a mere novelty.

The analysis identifies the ten most critical "AI-Hybrid" keywords appearing in non-coding job descriptions. These competencies can be broadly categorized into three distinct operational pillars: Risk Mitigation, Workflow Architecture, and Applied Execution.

Pillar 1: Risk Mitigation and Quality Control

The highest premium in the non-technical AI job market is placed on candidates who protect the organization from algorithmic failures. As systems scale, the potential for automated errors scales proportionally, requiring rigorous human oversight.

1. AI Output Verification

AI Output Verification is the systematic process of reviewing, fact-checking, and structurally correcting content, code, or analytical data generated by an artificial intelligence model. Large language models are notoriously prone to generating logical inconsistencies or fabricating facts entirely. Organizations across industries are desperate for human professionals to act as operational "guardrails" to prevent these automated errors from reaching public platforms, client deliverables, or executive dashboards. A communications graduate, for instance, can claim this highly sought-after competency by detailing how they consistently audited AI-generated marketing copy against strict brand guidelines and factual product specifications, establishing a formal "confidence scoring" protocol prior to public distribution.

2. Hallucination Mitigation

Hallucination mitigation involves the active implementation of strategic workflows designed to prevent, identify, and swiftly correct false information generated with high confidence by an AI system. A single AI hallucination embedded within a corporate financial report or a public-facing customer service interaction can trigger immense reputational damage, customer churn, and severe legal liability. Resumes that highlight specific, quantifiable instances where the candidate caught and neutralized AI errors demonstrate immense value. Candidates should document their ability to implement cross-referencing protocols that reduce factual inaccuracies in automated preliminary research.

3. Bias Detection and AI Oversight

This competency requires continuous monitoring of AI outputs to ensure they do not inadvertently propagate historical societal biases, discriminatory language, or exclusionary practices. As organizations increasingly deploy algorithmic systems within human resources, targeted marketing, and financial lending, the operational risk of algorithmic bias represents a critical threat. Non-technical candidates possessing academic backgrounds in the humanities, sociology, ethics, or mass communications are well positioned to serve as ethical auditors for these systems. Showcasing academic thesis work or practical internship experience where synthetic content was systematically reviewed for inclusive language and demographic neutrality serves as an excellent demonstration of this skill.
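One way to picture the cross-referencing protocol mentioned under output verification and hallucination mitigation is a simple check that every number in an AI draft also appears in the approved source material. The following is a minimal sketch, not a production fact-checker: the function name `unverified_numbers`, the sample spec sheet, and the sample draft are all hypothetical, and a real review would still require a human to read the flagged claims in context.

```python
import re

# Matches integers, decimals, and percentages such as "12", "1.4", or "40%".
NUMBER = re.compile(r"\d+(?:\.\d+)?%?")

def unverified_numbers(draft: str, source: str) -> list[str]:
    """Return numeric claims in the draft that never appear in the source text."""
    source_numbers = set(NUMBER.findall(source))
    flagged = []
    for claim in NUMBER.findall(draft):
        if claim not in source_numbers and claim not in flagged:
            flagged.append(claim)
    return flagged

# Hypothetical inputs, for illustration only.
spec_sheet = "Battery life: 12 hours. Weight: 1.4 kg. Warranty: 2 years."
ai_draft = "Enjoy 18 hours of battery life in a 1.4 kg body, backed by a 2 year warranty."

flagged = unverified_numbers(ai_draft, spec_sheet)
print(flagged)  # numbers a human reviewer should trace back to a source
```

A check like this only narrows where the reviewer looks; it cannot judge whether a number that does appear in the source is being used truthfully.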

Pillar 2: Workflow Architecture and System Scalability

The second pillar focuses on the ability to design systems that allow teams to work more efficiently, moving beyond individual productivity to organizational scalability.

4. Prompt Library Management

Prompt Library Management involves the creation, hierarchical organization, continuous optimization, and strict version control of highly effective AI prompts utilized across an entire team or department. Rather than permitting every individual employee to independently reinvent effective interactions, mature companies maintain centralized, curated repositories of proven instructional prompts. Managing this complex library ensures consistency in corporate messaging and dramatically accelerates operational efficiency. A recent graduate can legitimately claim this skill by demonstrating how they categorized and maintained a suite of highly specific, constraint-bound prompts for a university organization or internship program, effectively reducing the task initiation time for their peers.

5. Agentic Workflow Optimization

Agentic Workflow Optimization signifies the ability to strategically string together multiple specialized AI tools or autonomous "agents" to fully automate a complex, multi-step business process. The 2026 enterprise standard has evolved far beyond simple question-and-answer chatbots toward "Software 3.0," in which autonomous agents work in synchronized sequences to achieve overarching goals. Non-coders who possess the systems-thinking required to map out these logic flows using no-code or low-code integration platforms are highly sought after. A candidate demonstrates this by detailing how they designed a multi-tool pipeline: deploying an AI web-scraper to aggregate competitive data, routing that data into an LLM for thematic summarization, and utilizing an automated formatting tool to generate a final presentation deck.

6. Contextual Grounding (RAG Implementation Strategy)

Retrieval-Augmented Generation (RAG) involves supplying an AI model with highly specific, proprietary corporate documents so that it answers queries based exclusively on that vetted data, rather than relying on its generalized, publicly scraped training data. While software engineers are responsible for building the technical RAG architecture, non-technical staff are urgently needed to curate, clean, semantically tag, and structure the institutional knowledge base documents that feed these systems. Candidates should highlight their ability to curate structured databases of organizational knowledge formatted specifically to be ingested by an AI tool, thereby ensuring high accuracy and mitigating hallucination risks.
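To make the curation work behind contextual grounding more concrete, the sketch below splits a plain-text policy document into one tagged chunk per heading, which is roughly the shape of record a retrieval system might ingest. Everything here is an assumption for illustration: the `KnowledgeChunk` fields, the `chunk_by_heading` helper, the "line ending in a colon is a heading" convention, and the sample policy text are hypothetical and not tied to any particular RAG product.

```python
from dataclasses import dataclass, field

@dataclass
class KnowledgeChunk:
    doc_id: str                      # which source document the chunk came from
    section: str                     # heading the text was found under
    text: str                        # cleaned body text
    tags: list[str] = field(default_factory=list)  # curator-assigned labels

def chunk_by_heading(doc_id: str, raw: str, tags: list[str]) -> list[KnowledgeChunk]:
    """Split a document into one chunk per heading (a line ending in ':')."""
    chunks: list[KnowledgeChunk] = []
    section, lines = "Preamble", []
    for line in raw.splitlines():
        stripped = line.strip()
        if stripped.endswith(":"):
            # A new heading starts: store the previous section if it had text.
            if lines:
                chunks.append(KnowledgeChunk(doc_id, section, " ".join(lines), list(tags)))
            section, lines = stripped[:-1], []
        elif stripped:
            lines.append(stripped)
    if lines:
        chunks.append(KnowledgeChunk(doc_id, section, " ".join(lines), list(tags)))
    return chunks

# Hypothetical HR policy text, for illustration only.
policy = """Remote Work:
Employees may work remotely up to three days per week.
Requests go through the team lead.

Expenses:
Submit receipts within 30 days.
"""

chunks = chunk_by_heading("hr-policy-001", policy, ["hr", "policy"])
for chunk in chunks:
    print(chunk.section, "->", chunk.text)
```

Keeping the document ID and section name on every chunk is what lets a reviewer trace an AI answer back to the exact passage it was grounded in.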

Pillar 3: Applied Execution and Ecosystem Fluency

The final pillar focuses on the daily application of artificial intelligence tools to execute business strategies effectively and securely.

7. Human-in-the-Loop (HITL) Validation

HITL Validation is the practice of designing and participating in workflows where an artificial intelligence system performs the heavy computational or generative lifting, but a qualified human professional must review, refine, or explicitly approve the output before it is finalized, executed, or deployed. This practice is legally and ethically mandated in numerous high-stakes industries to prevent catastrophic automated errors. It represents the ultimate, highly desired synthesis of machine scale and human judgment. Candidates must frame their AI-assisted projects explicitly as "HITL workflows," demonstrating how machine efficiency was ultimately governed by human accountability.

8. Synthetic Data Utilization

The utilization of synthetic data involves using generative AI to create artificial, mathematically representative, yet entirely privacy-compliant datasets. These datasets are used to simulate market scenarios, stress-test business strategies, or train subsequent models without ever exposing sensitive, real-world customer data to security breaches. With international data privacy laws becoming increasingly punitive, organizations rely heavily on synthetic data for safe strategic testing. A marketing graduate, for example, might detail how they utilized synthetic customer personas generated by an LLM to simulate a focus group or perform a rigorous mock A/B test before committing actual financial resources to an advertising campaign.

9. Conversational AI Optimization

This competency requires training, refining, and scripting the underlying dialogue logic for customer-facing chatbots or internal AI assistants to ensure they sound natural, empathetic, and resolve user intent efficiently. Corporations widely utilize tools like Paradox for customer service inquiries and initial recruitment screening. These systems require professionals who deeply understand linguistics, human empathy, and conversational mapping to program the interaction flows. Experience designing complex dialogue trees, establishing strict "brand voice" constraints for custom GPT assistants, or analyzing historical chatbot failure logs to improve future automated responses demonstrates profound value.

10. AI Ecosystem Fluency

AI Ecosystem Fluency is a demonstrated, agile ability to rapidly adapt to newly released AI tools, while understanding the comparative architectural advantages of different models for specific tasks. The artificial intelligence landscape evolves on a monthly basis. Employers actively avoid candidates who only know how to operate a single, specific tool; instead, they seek professionals who understand the fundamental concepts of algorithmic augmentation and can learn new platforms quickly. Listing a diverse technology stack of various AI tools used to achieve differing outcomes demonstrates a highly desirable, tool-agnostic, and problem-first approach to modern work.
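The HITL validation described in item 7 can be pictured as a gate: an AI draft cannot be published until a named human has reviewed it, and the approval is recorded. The sketch below is a minimal illustration under stated assumptions, with the `Draft` record, the `review` and `publish` functions, and the reviewer name all hypothetical rather than drawn from any specific compliance system.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Draft:
    content: str
    status: str = "pending_review"   # becomes "approved" or "rejected" after review
    reviewer: Optional[str] = None   # recorded so the approval is auditable

def review(draft: Draft, reviewer: str, approve: bool, edited: Optional[str] = None) -> Draft:
    """Record a human decision, optionally replacing the AI text with an edited version."""
    if edited is not None:
        draft.content = edited
    draft.status = "approved" if approve else "rejected"
    draft.reviewer = reviewer
    return draft

def publish(draft: Draft) -> str:
    """Refuse to publish anything a human has not explicitly approved."""
    if draft.status != "approved":
        raise PermissionError("Human approval is required before publishing.")
    return f"Published (approved by {draft.reviewer}): {draft.content}"

# Hypothetical AI draft containing a feature claim the reviewer removes.
ai_draft = Draft("Our new app supports offline mode and voice search.")
try:
    publish(ai_draft)
except PermissionError as err:
    print("Blocked:", err)

review(ai_draft, reviewer="j.doe", approve=True, edited="Our new app supports offline mode.")
print(publish(ai_draft))
```

The design choice worth noting is that the gate lives in `publish` itself, so skipping the review step fails loudly instead of silently shipping unreviewed output.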

Summary of AI-Hybrid Keywords for the Non-Technical Graduate

| Keyword Competency | Core Enterprise Value | Application Example for Non-Technical Roles |
|---|---|---|
| AI Output Verification | Ensures factual accuracy and prevents dissemination of false data. | Auditing AI-generated press releases against product specification sheets. |
| Hallucination Mitigation | Protects brand reputation and reduces legal liability from AI fabrications. | Implementing cross-referencing protocols for automated market research. |
| Bias Detection & Oversight | Ensures demographic neutrality and adherence to ethical standards. | Reviewing automated outreach campaigns for inclusive, neutral language. |
| Prompt Library Management | Drives team-wide consistency and drastically reduces task initiation time. | Curating a version-controlled database of optimized marketing prompts. |
| Agentic Workflow Optimization | Automates complex, multi-step processes via connected AI tools. | Designing a Zapier pipeline connecting a data scraper to an LLM summarizer. |
| Contextual Grounding | Prepares clean, structured proprietary data to feed enterprise AI systems. | Tagging and formatting internal HR policy documents for chatbot ingestion. |
| Human-in-the-Loop Validation | Synthesizes machine speed with mandatory human accountability. | Reviewing and approving AI-drafted client communications before sending. |
| Synthetic Data Utilization | Enables safe strategy testing without violating data privacy regulations. | Generating artificial consumer personas to simulate campaign reactions. |
| Conversational AI Optimization | Improves user experience and resolution rates in automated interactions. | Scripting empathetic dialogue trees and brand voice constraints for chatbots. |
| AI Ecosystem Fluency | Ensures organizational agility as new technological tools emerge. | Utilizing distinct, specialized tools for text, data analysis, and image generation. |

Section 3: The "STAR-AI" Behavioral Storytelling Framework

Successfully navigating the applicant tracking system represents only the preliminary hurdle in the 2026 job market. During subsequent behavioral interviews, candidates must eloquently and persuasively articulate their operational value to human hiring managers and automated evaluators alike. For decades, the traditional STAR method—an acronym standing for Situation, Task, Action, and Result—has been the gold standard for behavioral interviewing. However, in an augmented labor market, the traditional STAR framework fails to capture the necessary nuances of modern work. If a candidate utilizing the traditional method simply states, "I used a generative AI tool to write a comprehensive market report, and it saved me three hours of labor," the recruiter interprets this statement negatively. The underlying message received is, "I outsourced my cognitive responsibilities to a machine, and I am therefore entirely replaceable by that same machine."

To effectively counteract this dangerous perception, the 2026 interviewing standard has evolved into the STAR-AI Method: Situation, Task, AI-Tool used, Refinement made by the human, and Impact. This modernized framework actively centers the human professional's critical thinking, domain expertise, and quality assurance capabilities, positioning the artificial intelligence merely as an initial accelerant rather than the final producer.

Deconstructing the STAR-AI Framework

The methodology commences with the Situation (S) phase, wherein the candidate establishes the broader context and the professional stakes involved. This requires painting a vivid picture of the complex business environment, the specific academic challenge, or the systemic inefficiency that necessitated an intervention. Following the situation, the candidate defines the Task (T). This step involves articulating the specific operational objective, detailing precisely what needed to be delivered, and outlining the severe constraints involved, whether they pertained to abbreviated timelines, restricted budgets, or exceedingly high quality standards.

The narrative then progresses to the AI-Tool Used (A) phase. Crucially, candidates must explicitly name the specific technology ecosystem utilized and the strategic approach employed to deploy it. A candidate must never vaguely state that they "prompted an AI." Instead, they must discuss the underlying architecture of the prompt, the specific contextual data provided to ground the model, and the rationale for selecting one specific tool over another.

The most critical phase of the entire framework is the Refinement (R) step. This is the precise juncture where the candidate wins the interview and proves their indispensability. The candidate must detail the mandatory human intervention required to finalize the task. They must explain how the initial AI output was inherently flawed, overly generic, factually inconsistent, or misaligned with the strategic objective. The narrative must then pivot to showcase what specific domain expertise, ethical oversight, or factual verification the candidate applied to transform the raw, synthetic output into a finalized, high-value corporate asset. Finally, the framework concludes with the Impact (I). The candidate must quantify the final business outcome, deliberately combining the sheer efficiency and speed gained by utilizing the AI with the superior quality and accuracy ensured solely by the human refinement process.

Case Study: Analyzing a Flawed versus a Masterful Interview Response

To illustrate the stark difference between an easily replaceable candidate and an indispensable augmented professional, consider a scenario where a candidate is asked to describe a time they managed a highly complex, time-sensitive communications project.

The flawed response, characteristic of a candidate who outsources their value, typically sounds like this: "My team was unexpectedly tasked with creating a massive, multi-platform social media campaign for a new product launch in just three days. I immediately used a generative AI tool to generate fifty different tweets and LinkedIn posts based directly on our product brochure. I then copied and pasted them into our automated scheduling tool, which saved our entire department about a week of brainstorming and drafting time, and the campaign launched perfectly on schedule." This response is fatal in 2026. The candidate displays zero quality control, a complete lack of brand voice oversight, and extreme risk blindness. They have successfully proven that they are entirely replaceable by the very software they utilized.

Conversely, the masterful response employing the STAR-AI approach sounds entirely different: "We faced a severe constraint, tasked with launching a multi-channel digital campaign in just three days, a complex process that normally requires two weeks of lead time. We required fifty unique, highly technical posts tailored to distinctly different buyer personas. To accelerate the foundational work, I engineered a dynamic prompt library utilizing a secure enterprise LLM. I fed the model our proprietary technical manuals and strict brand voice guidelines, instructing it to generate a baseline matrix of content. As I anticipated, the initial AI drafts were structurally sound but lacked empathetic resonance and actually hallucinated a software feature we had not yet released. I immediately implemented a rigorous AI output verification pass. I spent my cognitive energy rewriting the narrative hooks for genuine human empathy, meticulously correcting the technical inaccuracies, and ensuring the tone aligned perfectly with our corporate identity. By utilizing the AI strictly for the structural heavy lifting and focusing my energy on strategic refinement, I reduced our content production time by seventy percent and caught every technical error prior to publication. The resulting campaign saw a forty percent increase in targeted user engagement compared to our previous, non-augmented campaigns."

This candidate unequivocally proves that they deeply understand the tool's severe limitations. They successfully highlight their unique human domain expertise—encompassing empathy, brand voice alignment, and factual accuracy—as the strictly indispensable element that guaranteed the project's success.

Evolution of Behavioral Storytelling: STAR vs. STAR-AI

| Phase | Traditional STAR | Modern STAR-AI | Focus Shift |
|---|---|---|---|
| Framework Structure | Situation, Task, Action, Result (STAR) | Situation, Task, AI-Tool used, Refinement, Impact (STAR-AI) | Adding explicit AI-tool and human-refinement steps to the behavioral interviewing framework. |
| Action / Task Description | Generic: "Managed social media accounts. Assisted with customer inquiries." | Enhanced: "Developed and executed a comprehensive social media strategy across LinkedIn and Twitter." | Demonstrating before-and-after transformations using STAR and AI refinement. |
| Result / Impact | Assesses candidates' past behaviors as predictors of future performance. | "Resulting in a 40% increase in brand engagement." "Resolved 150+ complex issues weekly with a 95% first-call resolution." | Showcasing concrete, quantifiable outcomes and supporting transparent AI auditing. |
| Drafting & Ideation | - | AI output provides a "great head start" on crafting deliverables like documentation or letters. | Focusing on how to effectively specify the deliverable in initial prompts. |
| Human Refinement | - | Adding appropriate business information and modifying the output in own tone and language. | Conveying technical details to non-technical stakeholders to drive product success in a timelier manner. |

The STAR-AI method explicitly requires the candidate to highlight their 'Refinement' capabilities, proving that they supervise the AI rather than relying on it blindly.

Section 4: Navigating Algorithmic Gatekeepers: Paradox and HireVue

The recruitment pipeline itself is now heavily mediated and guarded by artificial intelligence. By 2026, the overwhelming majority of Fortune 500 corporations, global financial institutions, and major consulting firms utilize automated screening technologies to efficiently manage massive global applicant volumes. Platforms such as HireVue, which facilitates asynchronous video interviews and psychometric assessments, and Paradox, which conducts conversational text-based screening, serve as the primary gatekeepers. Non-technical candidates must possess a fundamental understanding of the underlying mechanics, psychological evaluation criteria, and compliance guardrails of these automated platforms to successfully navigate them.

The Conversational Gatekeeper: Paradox AI

Paradox operates primarily as an automated conversational assistant—often humanized with names like "Olivia"—that interacts with prospective candidates via SMS, WhatsApp, or dedicated web chat interfaces. Unlike the rudimentary, rule-based chatbots of previous decades that required users to select from static menus, Paradox utilizes highly advanced Natural Language Processing (NLP) to dynamically parse candidate intent, extract relevant experiential skills from highly informal text, and autonomously schedule subsequent human interviews if the candidate meets the algorithmic threshold.

Successfully navigating a Paradox interview requires specific tactical communication. Because the system relies heavily on NLP to extract meaning, candidates must ensure a high keyword density within their conversational dialogue. When prompted with a seemingly casual text message such as, "Tell me a little bit about your recent experience," candidates must resist the urge to reply with standard conversational pleasantries. Instead, they should reply with highly structured, keyword-rich statements. A strategically optimized response would read: "In my most recent role, I successfully utilized agentic workflows to automate preliminary data collection, which was immediately followed by a strict human-in-the-loop verification process to ensure total compliance and accuracy." Furthermore, NLP models inherently favor structural clarity over linguistic complexity. Candidates must actively avoid convoluted sentence structures, passive voice, or the heavy use of industry slang. The most effective strategy involves utilizing declarative, direct statements that seamlessly and explicitly map back to the specific requirements listed in the job description.
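Before sending a reply to a conversational screener, a candidate could run a quick self-check that the draft actually contains the terms listed in the job description. The sketch below is only a personal drafting aid under stated assumptions: the `keyword_coverage` helper and the `job_terms` list are hypothetical, and plain substring matching says nothing about how Paradox or any other vendor actually parses text.

```python
def keyword_coverage(response: str, required_terms: list[str]) -> dict[str, bool]:
    """Report, for each required term, whether it appears in the response (case-insensitive)."""
    text = response.lower()
    return {term: term.lower() in text for term in required_terms}

# Hypothetical terms copied from a job description.
job_terms = ["agentic workflows", "human-in-the-loop", "output verification"]

reply = (
    "In my most recent role, I successfully utilized agentic workflows to automate "
    "preliminary data collection, which was immediately followed by a strict "
    "human-in-the-loop verification process to ensure total compliance and accuracy."
)

coverage = keyword_coverage(reply, job_terms)
missing = [term for term, found in coverage.items() if not found]
print("Missing terms:", missing)
```

A missing term is a prompt to revise the reply truthfully, not a reason to insert vocabulary the candidate cannot back up with a real example.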

The Asynchronous Evaluator: HireVue

HireVue represents a significantly more complex evaluative hurdle. The platform presents candidates with a series of pre-recorded video prompts and records their timed responses. While older, more primitive iterations of HireVue faced intense public backlash and regulatory scrutiny for attempting to analyze facial micro-expressions and vocal intonations—a practice now largely abandoned due to extreme bias concerns and legal pressure—the 2026 iteration operates entirely differently. Modern HireVue systems focus almost exclusively on advanced transcript analysis and sophisticated competency mapping, driven by proprietary, highly regulated, and securely locked LLMs.

A critical component of understanding HireVue is recognizing its compliance architecture. To comply with anti-bias regulations, HireVue utilizes "deterministic" AI. This means the algorithms evaluating the candidate are entirely static; they do not learn, adapt, or change their scoring rubrics on the fly during the interview. They evaluate the transcribed audio text against a strictly validated, scientifically established benchmark of top organizational performers, designed and locked by Industrial-Organizational (IO) Psychologists. Candidates are scored on structured psychological competencies such as complex problem-solving, behavioral adaptability, and increasingly, comprehensive AI literacy.

Automated interviewers are deliberately programmed to ask complex situational questions meticulously designed to expose candidates who lack critical thinking skills regarding artificial intelligence implementation. Candidates can expect targeted prompts specifically engineered to assess their understanding of technological limitations and risk management.

The Hallucination Assessment

A standard algorithmic prompt often takes the form of: "Tell me about a specific time an AI tool or automated system provided you with incorrect, biased, or misleading information. How did you identify the error, and what exact steps did you take to correct it?" The evaluating algorithm is specifically listening for linguistic markers indicating personal accountability and rigorous verification. Candidates must employ the STAR-AI method here, explicitly utilizing terms such as "cross-referenced," "independently verified," "routine audit," and "factual inconsistency." The response must thoroughly describe the manual human verification process that successfully caught the algorithmic error before it caused organizational harm.

The Bias and Ethical Governance Assessment

Candidates frequently encounter prompts such as: "Describe a complex situation where you had to ensure a project or corporate communication was entirely inclusive and unbiased, despite utilizing automated tools to generate the baseline content." In this scenario, the algorithm is evaluating the candidate's capacity for ethical governance. A successful answer discusses the inherent systemic biases present in the training data of large language models. The candidate must clearly explain how they proactively implemented a structured "ethical oversight protocol" to meticulously review all synthetically generated output for demographic neutrality prior to publication.

The Productivity versus Quality Assessment

Finally, the system tests operational judgment with questions like: "How do you actively balance the immense speed of using modern AI tools with the critical need to maintain high quality, accuracy, and highly personalized outputs?" The deterministic algorithm is searching for concepts related to the human acting as a "force multiplier." Candidates should explain the strategic philosophy of the "ten percent solution": utilizing AI exclusively for the lowest-value, most repetitive tasks, which typically constitute the initial ten percent of project effort. This strategic delegation actively frees up vital cognitive bandwidth for high-value strategic refinement, empathy injection, and quality assurance, which constitute the remaining ninety percent of the work.

| Assessment Category | HireVue/Paradox Intent | Algorithmic Keyword Targets | Optimal Narrative Strategy |
|---|---|---|---|
| Hallucination Testing | Assess candidate's ability to catch machine fabrication. | Cross-referenced, verified, audited, factual inconsistency, override. | Detail a specific instance of tracing an AI claim to its source and finding a flaw. |
| Bias & Ethics Testing | Evaluate understanding of systemic data bias and inclusive practices. | Demographic neutrality, inclusive, ethical oversight, systemic bias, compliance. | Discuss the implementation of a manual review protocol focused purely on demographic fairness. |
| Productivity Balance | Determine if the candidate sacrifices quality for algorithmic speed. | Force multiplier, strategic refinement, cognitive bandwidth, high-value tasks. | Explain the delegation of rote tasks to AI to reserve human energy for complex strategic thinking. |
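As a rough self-check, a candidate could compare a draft answer against the keyword targets in the table before recording it. The sketch below is only that: the category keys, the `keyword_coverage` function, and the matching rule are assumptions made for illustration, and it does not reproduce how any vendor's interview platform actually scores a response.

```python
import re

# Keyword targets copied from the table above.
# The dictionary keys are labels invented for this sketch.
KEYWORD_TARGETS = {
    "hallucination": ["cross-referenced", "verified", "audited",
                      "factual inconsistency", "override"],
    "bias_ethics": ["demographic neutrality", "inclusive", "ethical oversight",
                    "systemic bias", "compliance"],
    "productivity": ["force multiplier", "strategic refinement",
                     "cognitive bandwidth", "high-value tasks"],
}


def keyword_coverage(answer: str, category: str) -> dict:
    """Report which target phrases for a category appear in a draft answer."""
    text = answer.lower()
    found, missing = [], []
    for phrase in KEYWORD_TARGETS[category]:
        # Require a non-word character (or string edge) on both sides so that
        # "verified" is not counted when the draft only says "unverified".
        pattern = r"(?<![\w-])" + re.escape(phrase) + r"(?![\w-])"
        if re.search(pattern, text):
            found.append(phrase)
        else:
            missing.append(phrase)
    return {"found": found, "missing": missing}


if __name__ == "__main__":
    draft = ("I cross-referenced the figure, independently verified the source, "
             "and flagged a factual inconsistency.")
    print(keyword_coverage(draft, "hallucination"))
```

A list of missing phrases is a prompt to revisit the story, not a target to stuff: the phrases only help if the underlying example genuinely involved that kind of verification.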

Section 5: LinkedIn "Social Proof" and the Death of the Standard Application

In the highly saturated 2026 job market, relying solely on an Applicant Tracking System to parse a submitted resume is considered an exceptionally low-probability career strategy. Recruiters, facing an onslaught of AI-generated, perfectly formatted applications, now actively bypass standard inboxes to hunt for "Proof of Work" (PoW). Proof of Work refers to publicly verifiable, tangible demonstrations of professional competence. For a software engineer, this traditionally takes the form of a complex GitHub repository. For a non-technical graduate, the equivalent is a highly optimized, content-rich LinkedIn presence.

The standard, traditional graduate post—typically reading, "I am thrilled to announce I have graduated from XYZ University and am currently looking for an entry-level role in communications!"—generates absolutely zero algorithmic traction or recruiter interest because it provides zero evidence of corporate utility. Instead, candidates must strategically position themselves as active, capable problem solvers who continuously leverage modern tools to generate value.

The architecture of a high-engagement LinkedIn post in 2026 follows a highly specific narrative arc designed to demonstrate the transition from "Software 2.0" (human-coded rules) to "Software 3.0" (autonomous, AI-driven systems). The post must contain a bold Hook that challenges an assumption, a detailed breakdown of the Architecture showing how a problem was approached, a clear explanation of the Execution detailing the exact blend of AI and human effort, and finally, the Result showing quantifiable business impact. These posts never explicitly ask for jobs; they demonstrate undeniable value, thereby prompting recruiters to initiate inbound outreach.

Three "Proof of Work" LinkedIn Post Templates for Non-Technical Graduates

To successfully attract inbound recruiter interest, candidates should utilize the following structured content templates, adapting them to their specific domain expertise.

| Template Concept | The Narrative Hook | The Execution Architecture | The Result & Call to Action |
|---|---|---|---|
| 1. The Workflow Teardown (Focuses on Agentic Efficiency) | "Everyone claims AI will replace junior marketers. I spent the weekend building an automated workflow that proves AI doesn't replace us; it simply makes us 5x faster. Here is a teardown of how I automated my entire competitive analysis process." | "Traditionally, compiling competitive sentiment takes 10 hours. I built a 3-step pipeline: 1. RSS scraper pulls competitor blogs. 2. Claude 3 extracts core themes. 3. (Human Element) I synthesized these raw themes into a strategic narrative detailing why they made these moves." | "Time spent: 2 hours. Value delivered: Executive-ready strategy. The future belongs to those who orchestrate tools. If you need a marketing leader who builds workflows, let's connect." |
| 2. Catching the Hallucination (Focuses on Risk Management) | "An enterprise AI just tried to lie to me in a major research report. Here is exactly how I caught it, and why strict 'Human-in-the-Loop' verification is the single most important non-technical skill in 2026." | "While generating background research on consumer trends, the LLM confidently cited a 40% market drop. Instead of copying it, I ran my verification protocol. I traced the claim back and realized the AI had completely hallucinated the number by conflating unrelated datasets." | "AI is an incredible drafting tool, but a terrible final editor. Companies deploying AI without fact-checking are playing with fire. If you need a specialist who guarantees factual integrity, my DMs are open." |
| 3. The Prompt Library Showcase (Focuses on Scalability) | "I grew tired of writing the same press release outlines from scratch. So, I built a comprehensive 'Prompt Library' that anyone in my network can freely use." | "I refined 15 highly specific, constraint-bound prompts for B2B communications. These aren't generic. Each includes strict variables for brand tone, negative constraints (e.g., 'no jargon'), and a required output structure." | "By systematizing the drafting phase, I cut writing time by 60%. I now spend that time on storytelling and emotional resonance. Builders build systems. If your team needs to scale content responsibly, let's talk." |
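The third template describes prompts with variables for brand tone, negative constraints, and a required output structure. One way such a library entry might be represented is sketched below. The `PromptTemplate` class, its field names, and the sample press release entry are all hypothetical, chosen only to illustrate the idea; the source does not specify any particular format or tool.

```python
from dataclasses import dataclass, field
from string import Template


@dataclass
class PromptTemplate:
    """One hypothetical library entry: task text, constraints, output format."""
    name: str
    task: Template  # uses $placeholders such as $brand_tone
    negative_constraints: list = field(default_factory=list)
    output_structure: list = field(default_factory=list)

    def render(self, **variables: str) -> str:
        # Template.substitute raises KeyError when a placeholder has no value,
        # so a half-filled prompt is never produced silently.
        lines = [self.task.substitute(**variables)]
        if self.negative_constraints:
            lines.append("Do not: " + "; ".join(self.negative_constraints) + ".")
        if self.output_structure:
            lines.append("Required sections, in order: "
                         + ", ".join(self.output_structure) + ".")
        return "\n".join(lines)


# Illustrative entry; the wording and section names are assumptions.
press_release_outline = PromptTemplate(
    name="press_release_outline",
    task=Template("Draft a press release outline about $topic in a $brand_tone tone."),
    negative_constraints=["use jargon"],
    output_structure=["Headline", "Lead paragraph", "Quote", "Boilerplate"],
)

if __name__ == "__main__":
    print(press_release_outline.render(topic="a campus event", brand_tone="warm"))
```

Keeping the constraints and output structure as data rather than free text makes each entry easy to review and reuse, which is the scalability point the template is meant to demonstrate.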

Section 6: The Resume Makeover: Transitioning to the AI-Augmented Specialist

The final, critical step in the career preparation process requires translating this comprehensive conceptual framework directly into the candidate's resume. The overarching goal is to completely transform a static, task-based, historical document into a highly dynamic, impact-driven manifesto that unmistakably signals the candidate is an "AI-Augmented Professional." Standard resumes that simply list daily duties are heavily penalized by modern parsing algorithms.

This section provides a detailed, comparative breakdown, demonstrating exactly how to transform a generic "B.A. in Communications" profile into a highly competitive, algorithm-friendly "AI-Augmented Communications Specialist."

Restructuring the Professional Summary

The professional summary located at the top of the document must immediately and aggressively establish the candidate as an organizational force multiplier who inherently understands business value and scalability, not just an entry-level worker seeking repetitive tasks.

A traditional, generic summary typically reads: "Recent Communications graduate with a passion for writing, social media management, and creative storytelling. Highly organized team player looking for an entry-level role to learn and grow within a dynamic marketing department." This approach is passive; it focuses entirely on what the candidate wants to extract from the company ("to learn and grow") rather than on the operational value the candidate will inject into the business.

An AI-Augmented summary completely reframes the narrative: "AI-Augmented Communications Specialist leveraging advanced LLM workflows and proprietary prompt engineering to exponentially scale content production. Proven ability to combine rapid AI drafting capabilities with rigorous human-in-the-loop verification protocols to ensure absolute brand compliance, emotional resonance, and factual integrity. Seeking to bring agentic workflow optimization and data-driven storytelling to a forward-thinking communications team." This revised summary aggressively highlights modern capabilities, directly addresses enterprise anxieties regarding quality control, and utilizes extremely high-value ATS keywords.

Transforming the Experience and Projects Section

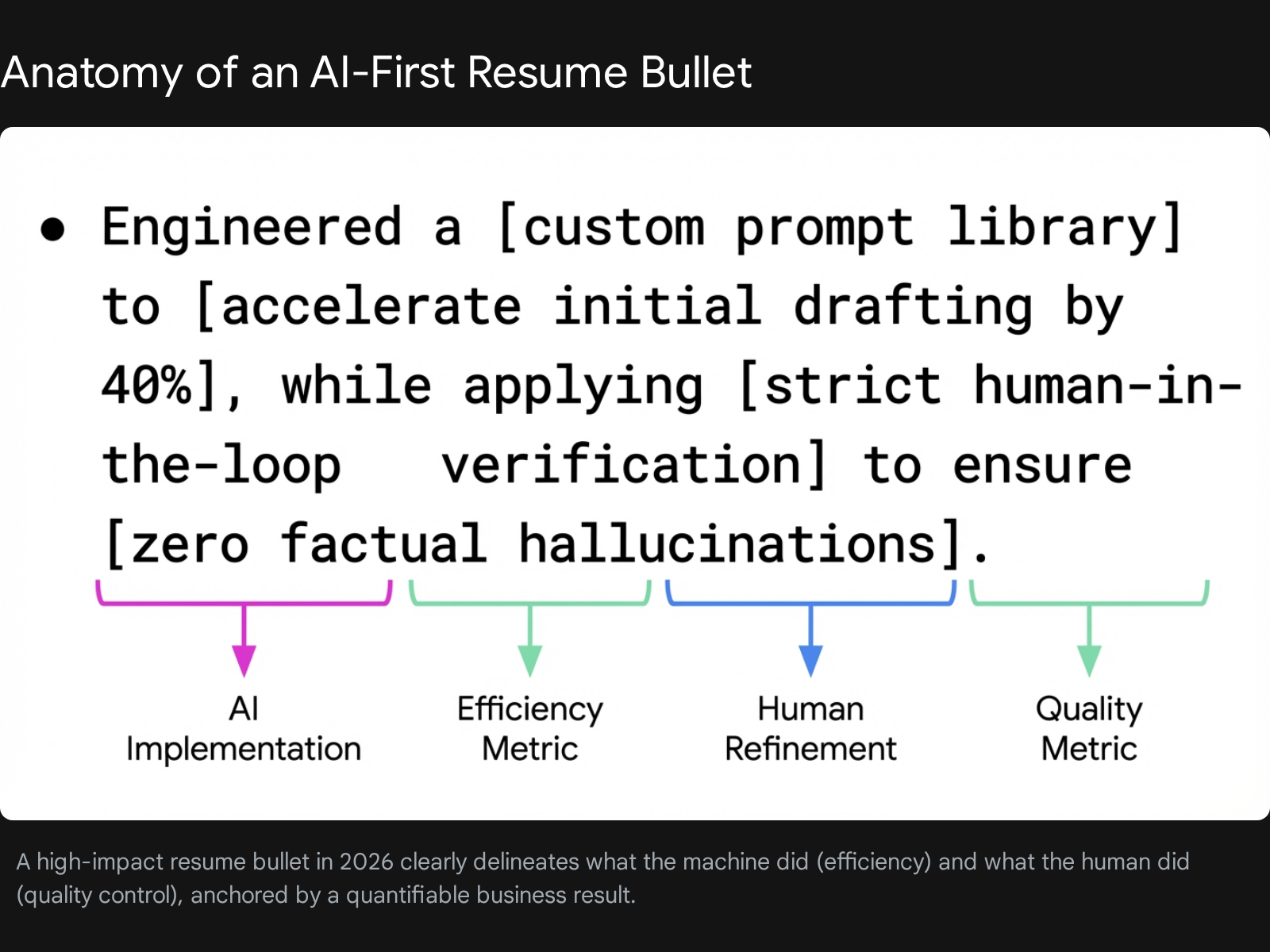

A common obstacle for recent graduates is a lack of formal, multi-year corporate experience. Therefore, rigorous academic projects, relevant internships, or independent "Proof of Work" portfolio pieces must be formatted and treated with the exact same gravity as professional engagements. Every single bullet point must be deeply quantified and strictly adhere to the "AI Tool + Human Refinement = Business Result" structure mandated by the STAR-AI framework.

To successfully transform experience bullets, a candidate must shift from documenting tasks to documenting systems.

| Resume Component | Traditional, Task-Based Formatting | Modern, AI-Augmented Formatting |
|---|---|---|
| Bullet 1 (Focus: Efficiency) | Managed social media accounts for the university debate club, posting three times per week. | Architected an automated content pipeline utilizing custom RSS scrapers and Claude 3.5, reducing weekly social media drafting time by 60% while increasing posting frequency. |
| Bullet 2 (Focus: Scalability) | Wrote press releases, newsletters, and blog posts for upcoming events. | Developed and maintained a centralized library of 20+ constraint-optimized prompts, ensuring consistent club branding and tone across multiple student contributors. |
| Bullet 3 (Focus: Quality Control) | Proofread and edited marketing materials before they were published online. | Served as the final 'Human-in-the-Loop' editor, meticulously auditing AI-generated press releases for factual accuracy, bias mitigation, and emotional resonance. |
| Bullet 4 (Focus: Data Privacy) | Assisted with market research to figure out what students wanted to see at events. | Generated synthetic audience personas using ChatGPT to simulate and A/B test marketing angles prior to campaign launches, optimizing event attendance. |

Rebuilding the Skills Section

The foundational skills section located at the bottom of the document must be strictly bifurcated to display comprehensive literacy. Traditional "soft skills" such as generic teamwork, basic public speaking, and standard organization are essentially assumed by employers and waste highly valuable ATS parsing space. The physical space on the page must be entirely reserved for high-value technical and augmented competencies.

Instead of listing "Microsoft Office, Public Speaking, Writing, Teamwork, Social Media, Research," the modern graduate must categorize their expertise. Under "Core Competencies," they should list high-level operational skills such as AI Output Verification, Human-in-the-Loop (HITL) Validation, Hallucination Mitigation, Brand Voice Architecture, and Strategic Storytelling. Directly beneath this, under a category titled "Technical & AI Ecosystems," the candidate must list the specific tools they orchestrate, such as LLM Ecosystems (GPT-4o, Claude 3 Opus, Gemini Advanced), Prompt Library Management platforms (PromptHub, Notion AI), Workflow Automation integrators (Zapier, Make), and Conversational AI Scripting environments. This structure proves to the parsing algorithm and the human reviewer that the candidate represents a fully modernized professional.

Conclusion: The Ultimate Adaptive Advantage

The 2026 labor market has irrevocably and permanently shifted the baseline requirements of entry-level employment. The traditional university degree, while still maintaining its status as a foundational marker of sustained academic effort and basic cognitive capability, is no longer sufficient as a standalone credential to guarantee corporate employment. As enterprise organizations aggressively integrate complex artificial intelligence systems into every facet of their daily operations, they do not merely seek human operators; they actively seek systems orchestrators, data verifiers, and ethical guardians.

For the non-technical graduate, this immense technological paradigm shift should not be viewed as an existential threat to white-collar employment, but rather as an unprecedented, highly lucrative opportunity. By strategically reframing their professional profile from a passive recipient of manual tasks to an active, highly capable manager of AI-augmented workflows, candidates can entirely bypass the traditional, grinding entry-level hierarchy. Mastering the specific vocabulary of AI integration, utilizing the sophisticated STAR-AI method to defeat algorithmic interviews, establishing undeniable proof of work on digital platforms like LinkedIn, and meticulously overhauling the structural anatomy of the resume will ensure that a candidate is never viewed as a biological worker easily automated by software. Instead, they will be recognized and compensated for what they truly represent in the modern enterprise environment: an utterly indispensable human force multiplier. Ignorance of artificial intelligence is ultimately a choice, but professional adaptability is a required strategy—and in the highly automated landscape of 2026, adaptability remains the ultimate, unrivaled qualification.