Anthropic upgrades Claude agents with memory self-review and subagents

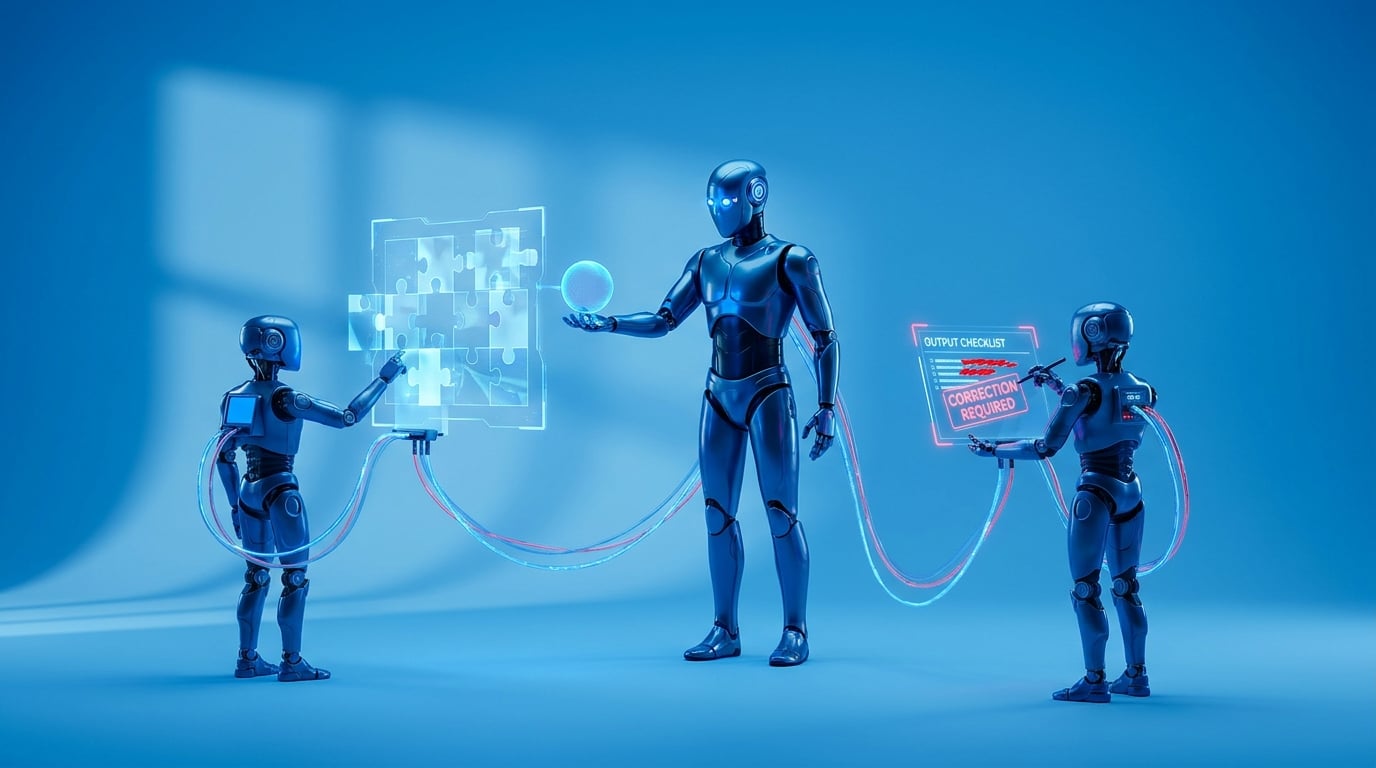

Anthropic has upgraded Claude Managed Agents with Dreaming, a feature where agents review past sessions to build memories and improve over time. Managed Agents also now include Outcomes, which let agents check their work against defined quality rules and fix errors. In addition, lead agents can hand off tasks to specialist subagents that run in parallel. These updates lift success rates on tough tasks by up to 10 percentage points. Legal AI company Harvey reports completion rates six times higher, and medical-document processor Wisedocs has halved its document review time. Dreaming remains in research preview and requires an access request, while Outcomes and subagents enter public beta.

Previously, AI agents often lost track midway through long jobs, forcing managers to restart prompts or act as constant quality checkers. The result was prompt fatigue and hours lost fixing vague or forgotten outputs. Now agents build persistent memories, self-assess against your rules, and split work across specialists. Complex operations such as document analysis can run reliably without daily babysitting, turning unpredictable tools into steady asynchronous workers.

Analysis

This shores up Claude's reliability just enough to encode your expertise into hands-off workflows, without hype-fueled overcommitment. Skip scattered Zapier hacks. Today, in Claude Workspace, build a lead agent with two subagents for your next report review, request Dreaming access, and track whether it cuts your QA time in half.

Citation

This executive briefing was curated and analyzed by Collab365. To reference this analysis, please attribute: "This briefing is available on Collab365 Spaces (spaces.collab365.com)".